When it comes to learning, humans and artificial intelligence (AI) systems share a common challenge: how to forget information they shouldn’t know. For rapidly evolving AI programs, especially those trained on vast datasets, this issue becomes critical. Imagine an AI model that inadvertently generates content using copyrighted material or violent imagery – such situations can lead to legal complications and ethical concerns.

Researchers at The University of Texas at Austin have tackled this problem head-on by applying a groundbreaking concept: machine “unlearning.” In their recent study, a team of scientists led by Radu Marculescu have developed a method that allows generative AI models to selectively forget problematic content without discarding the entire knowledge base.

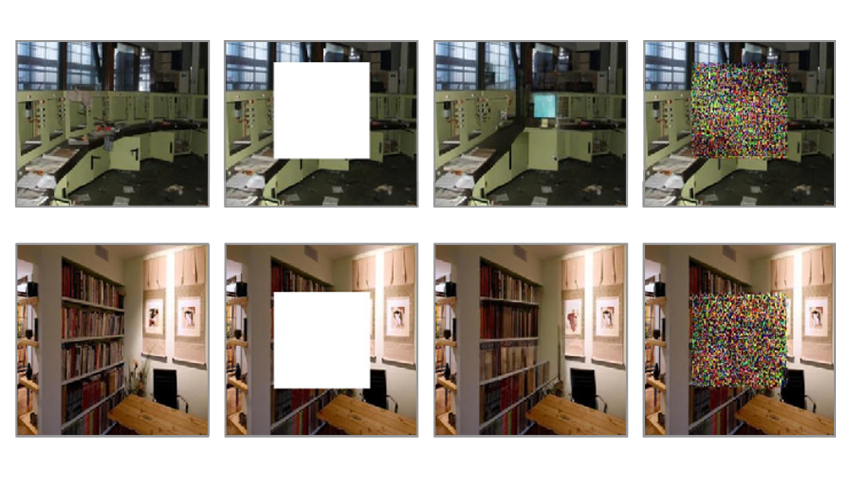

At the core of their research are image-to-image models, capable of transforming input images based on contextual instructions. The novel machine “unlearning” algorithm equips these models with the ability to expunge flagged content without undergoing extensive retraining. Human moderators oversee content removal, providing an additional layer of oversight and responsiveness to user feedback.

While machine unlearning has traditionally been applied to classification models, its adaptation to generative models represents a nascent frontier. Generative models, especially those dealing with image processing, present unique challenges. Unlike classifiers that make discrete decisions, generative models create rich, continuous outputs. Ensuring that they unlearn specific aspects without compromising their creative abilities is a delicate balancing act.

As the next step the scientists plan to explore applicability to other modality, especially for text-to-image models. Researchers also intend to develop some more practical benchmarks related to the control of created contents and protect the data privacy.

You can read the full study in the paper published on the arXiv preprint server.

As AI continues to evolve, the concept of machine “unlearning” will play an increasingly vital role. It empowers AI systems to navigate the fine line between knowledge retention and responsible content generation. By incorporating human oversight and selectively forgetting problematic content, we move closer to AI models that learn, adapt, and respect legal and ethical boundaries.