There are two kinds of AI. They are polar opposites. You need to Understand the difference.

Most people, most journalists, and most YouTube influencers do not know the difference. This leads to continued public conflation of the two kinds of AI in all the popular media. The difference is enormous, yet invisible to most of us, because we rarely examine reality at this level. And indeed, it doesn’t matter to most people.

But it matters greatly to anyone involved with AI at any level. Sadly, most people working in AI are still ignorant about these matters, which may well lead to Cognitive Dissonance about their work. They don’t understand why the systems they are building can work.

There was an AI revolution in 2012. “Deep Learning” outclassed decades of slow progress in multiple disciplines by double-digit performance margins. The switch from 20th to 21st Century AI only took a few years.

AI theory had (for good reasons) initially set off in the wrong direction in 1955… and had since been lost in the wrong desert. For 57 years.

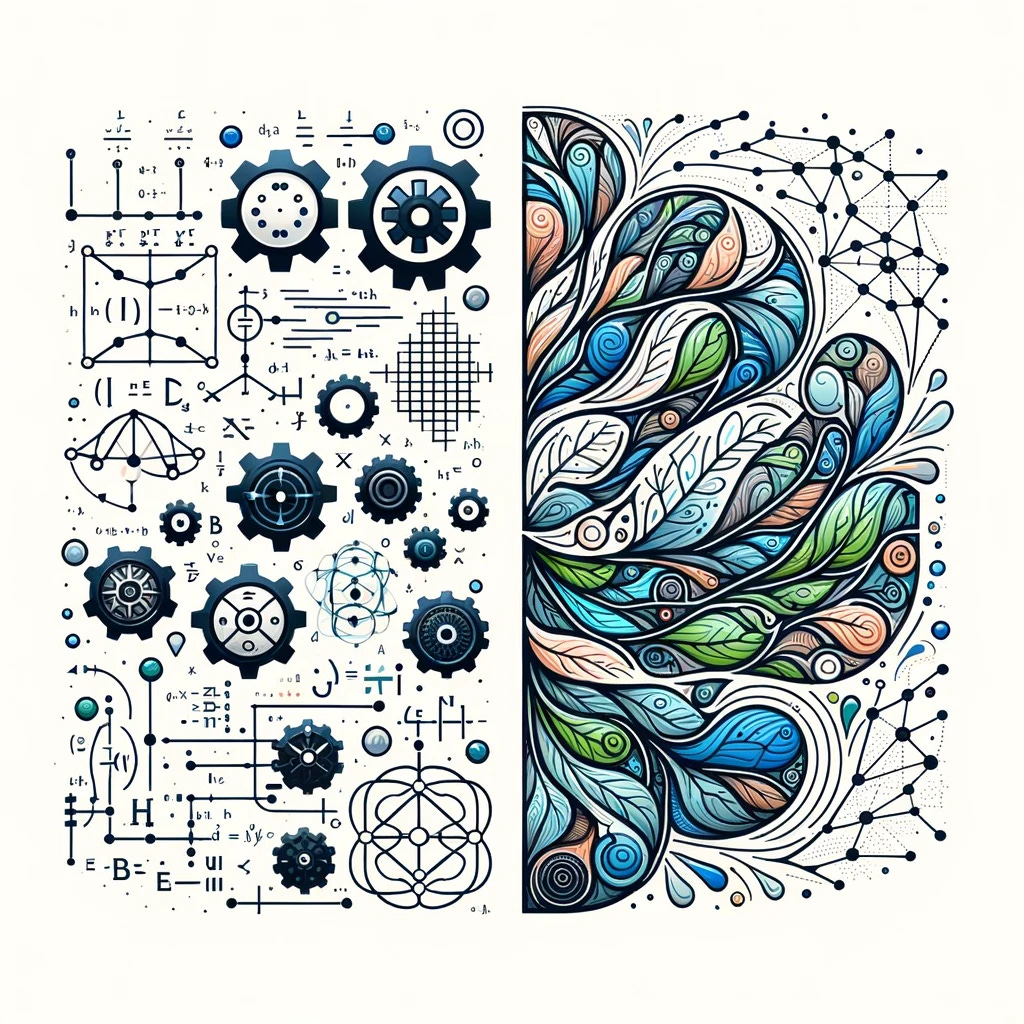

To outsiders, it looked like “AI just started working”. In reality, we replaced 20th Century Programming, Logic, and Reasoning based AI with its polar opposite – with Deep Neural Networks and Machine Learning.

To anyone reading about these ideas for the first time, brace yourself. If you were educated in any Science, Technology, Engineering, and Math (STEM) discipline, then you need to realize that your entire education and lifetime experience are working against you when you try to Understand these ideas.

I also have a STEM education, but I was lucky – I managed to slowly figure this out over 18 months from summer 1999 to January 1, 2001, because by sheer luck, I was well primed. What I have to say is not hard to understand. Many people, especially biologists, can get it in mere minutes. And once you get it, the way you see the world will change.

When this happens, about 1% of people (often AI researchers) will show shock-level responses hinting at major reorganizations of their world understanding happening in their brains… in seconds. It can be ugly. This is a radical change at the level of a religious conversion. It is painful to watch, like an electrical panel that is short-circuiting.

You have been warned. Onward. We need three scary-looking but precisely defined words to describe the difference.

Philosophy can be seen as the base level of our thinking. At the philosophy level, we do not even have Science. Philosophical truths tend to be simple and mostly obvious. There are no proofs. Good theories are coherent, consistent, match real world phenomena, and if useful, will provide a foundation for other systems. Such as Science.

The top (and most visible) part of Philosophy is Epistemology. Epistemology is the part of Philosophy that interfaces to the Real World, and is the correct and only place to discuss fundamental concepts like truth, falsehood, knowledge, understanding, reasoning, abstraction, learning, and problem solving.

I have a solid understanding of all these concepts, but we can simply focus on problem solving, because this is where we find the conflict. The Chasm.

At the top level it looks easy:

Hard problems require planning and reasoning.

Simple problems don’t.

We all use both kinds of problem solving daily. And the shock comes from realizing the following things:

- If we look at all the problems a brain solves in a day, and define “problem” appropriately, then we find we are using planning a lot less than we think. Each step we take requires the brain to sequence the leg muscles in the right cadence. Every sentence we speak requires formulating language. We understand language effortlessly. We understand what we are looking at with our eyes. These are “problems” we solve millions of times per hour without any planning or reasoning whatsoever.

- More complicated problems require planning. Our STEM education teaches us how to split a big problem into smaller problems and to delegate subproblems to different teams.

- STEM methods provide a number of advantages: Optimality, Completeness, Repeatability, Parsimony, Explainability, and Extrapolation, to name a few. The list is long. In some sense, they define science by enumerating its benefits.

- STEM style methods succeed when all remaining problems match simple (and note: Context Free) equations we know from STEM textbooks. We are effectively building a Model of the problem in an abstract space, and we can solve all subModels using our STEM equations and our data.

- But it turns out that entire classes of problems resist these scientific methods. Some problems cannot be reduced to smaller problems. And to the chagrin of scientists everywhere, the group of “irreducible” problems contains Language Understanding, Learning, and Abstraction Discovery. It turns out all “AI Level Problems” are irreducible. From a Scientific POV, that’s what makes them “AI Level Problems” in the first place.

- A STEM education is mostly useless for these AI Level Problems.

If you don’t believe this, consider going to college to learn about how to build AI systems. You learn Linear Algebra, Data Science, Linguistics, Tensorflow, etc. But after graduation, your first job will be Prompt Engineering.

Reductionism and Holism are the proper names, as used in Epistemology, for the two styles of problem solving. To many, these words carry so much baggage that they refuse to use them. The refusal to use words like “Holism” and “Holistic” already hints that these people have built up a memetic immune defense against these ideas… Because if true, then their mostly Reductionist STEM education would be mostly irrelevant. So they make Holism into a dirty word that cannot be discussed in academia.

My own definitions for these terms are compatible with common definitions found in the literature but they are the most precise you will ever see:

Reductionism is the Use of Models

Holism is the Avoidance of Models.

Models are Simplifications of Reality. F=ma is a Model. If you know m and a, you can compute F. But Science itself doesn’t tell you where to find m and a. You need to just know, from experience.
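As a minimal sketch of what "using a Model" means here, the F=ma example can be written as a one-line function. The function name `force` and the sample values are illustrative assumptions. Note that the code computes F, but nothing in it says where m and a come from; the caller has to supply them from experience.

```python
def force(mass_kg: float, acceleration_m_s2: float) -> float:
    """Newton's second law used as a Model: F = m * a."""
    return mass_kg * acceleration_m_s2


# Hypothetical inputs: the Model cannot tell us how to obtain these.
mass_kg = 2.0
acceleration_m_s2 = 3.0

print(force(mass_kg, acceleration_m_s2))  # 6.0
```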

Models are Scientific Models, Theories, Hypotheses, Conjectures, Equations, Formulas, and most computer programs written before the 2012 Revolution.

And Superstitions. They are Simplifications of Reality to popular, personal, or private theories, to models of how the world works, even if they may well be incorrect.

Many people think of Holism as “The Whole”, as in everything. This is not incorrect. More important for problem solving is to think of it as “Not Reduced” or “Not simplified to equations” and instead solved in the problem domain itself.
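One rough way to picture "solved in the problem domain itself" is a guess made directly from remembered examples, with no equation in between. The sketch below uses a nearest-neighbor lookup as a stand-in. This is a toy illustration under my own assumptions, not a description of how Deep Neural Networks work internally; the names `experiences` and `guess` and all the numbers are made up.

```python
# Remembered (situation, outcome) pairs; values are invented for illustration.
experiences = [
    ((1.0, 1.0), "safe"),
    ((1.2, 0.8), "safe"),
    ((5.0, 5.5), "danger"),
    ((6.0, 5.0), "danger"),
]


def guess(situation):
    """Return the outcome of the most similar remembered situation."""

    def squared_distance(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    _, outcome = min(experiences, key=lambda e: squared_distance(e[0], situation))
    return outcome


print(guess((1.1, 0.9)))  # safe
print(guess((5.5, 5.2)))  # danger
```

The answer comes from similarity to past experience rather than from a formula derived for the domain, and more experience is the only way to make the guesses better.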

The most common reason Science fails in certain problem domains is complexity of the domain. The world in general, the global economy, the stock market, the connections in the brain, and cellular biology are all overwhelmingly complex.

Understanding vision, hearing, and human languages are also problem domains that science has not been able to model well. Human brains can handle these, seemingly without effort.

And now, Holistic Methods in computers can also understand those same things.

On top of that, they are doing other wonderful things, like protein folding. This reminds me that there is a rather sharp dividing line between the kinds of problems Reductionist Methods can solve and the kinds they cannot. It was discussed by Erwin Schrödinger in 1948:

Chemistry is largely Reductionist. Models in chemistry accurately predict any chemical reactions.

Biochemistry is much more Holistic. Protein Folding frustrated STEM methods for decades. Holistic AI has provided us with entire catalogs of folded proteins with known functionality and can even design proteins that never evolved on Earth that will still be very valuable to medicine and materials.

To a Physicist, Life is on the other side of the Complexity Barrier.

To a Biologist, Physics is for simple problems.

Journalists and YouTube influencers should take note: The very things you all love to talk about (because it generates clicks) are part of the 20th Century AI Mythology that we need to eradicate.

Reductionist AI scaremongers told us about evil AIs that would be so smart they would take over the world, and, at the same time, be so stupid that they would not know when to stop making paperclips.

AI certainly has many kinds of risks, including AI supported power escalations by evil humans, but they all need to be re-evaluated in light of the 2012 Revolution. I have done my part: See AI Alignment Is Trivial.

Concepts like AGI and The Turing Test are also obsolete and are discussed in other Substack posts. And Large Language Models are technically not Models, because they, like language, are irreducible. Bah. I’m not fighting that one.

Science started forming around 1550 and we can recognize what some people did in 1650 as Science. Reductionist Science peaked with the Moon Shot. I need to emphasize this:

Reductionism is the greatest invention our species has ever made

But Reductionism only works where there are Scientists and Engineers that can handle the Holistic (Understanding) part. The parts that require “Epistemic Reduction”, the making of Models. Which means we are (largely) limited by human brain size to the size and complexity of problems we can attack. The Reductionist train is running out of track. As a species, we are ready to move to the next stage – beyond Science. Ironically, back to pre-scientific Holistic methods.

Humans are not General Intelligences at birth.

We are General Learners.

We have lived for thousands of years without Science and are still solving almost all of our daily problems Holistically, without planning or reasoning, by making decent guesses based on scant available information, a very rich context, and a lifetime of experience.

But now we can do it with computer support.

The AI Revolution of 2012 was exactly this: We finally figured out how to make our inherently Reductionist computers “just do it”: to jump to conclusions on scant evidence. The key was, of course, Learning. The technologies behind Deep Learning, Transformers, LLMs and all future AI systems are (and will forever be) based on Holistic principles. This was unthinkable to most people working on 20th Century AI, and is a very tough pill to swallow for anyone who believes in Science as the only way forward for our civilization.

Most of the remaining hard problems that Science has been unable to solve are problems where human understanding is limited by domain complexity. Just consider politics.

Species level problems require a Holistic Stance

I have decades of experience in both kinds of AI which makes me an expert on the difference. In 1998 I experienced firsthand the limitations of Reductionist Methods for AI, and almost left the field in disgust, but by 2001 I had identified it as a problem in Epistemology. I started over from scratch, appropriately on January 1, 2001. By 2004 I had created several experimental LLMs and I knew that Deep Neural Networks were the path forward. By 2006 I started evangelizing about “Model Free Methods” (initially caving to prejudice against the word “Holistic”) in talks at my AI MeetUp and on the first of several web sites.

Today I can offer a choice of paths that anyone involved in AI in any way can use to learn about these fundamental differences and their very surprising repercussions. My main research publishing website is Experimental Epistemology. The gentle path is to read everything starting from Chapter 1. The brutal path is to start with The Red Pill (Chapter 7) which is self-contained but aimed at an audience that is ready to tackle the topic head-on.

Note links at top of page. Most videos are on Vimeo. Substack is important.

This post is the shortest introduction I can make to the results of my 23 years of LLM and Epistemology research. The Red Pill is the best summary I have. But the repercussions of these ideas stretch far and wide, and into many aspects of life outside of AI. These ideas are both new and interesting. I want to continue exploring them. To this end I am planning to revive my AI MeetUp group. Note the new cool name. If you want to hear more about these things, join the group, or follow me on Facebook.

“The Matrix” movie used a red pill to initiate an eye-opening shift in the way we view Reality; hence the title of my post. My Trigger Warning page looks like a joke to many people, but if you can absorb My Red Pill, you will see the world very differently. If you are involved with AI at all, then understanding the difference is critical to both your work and your mental health.

Good Luck.